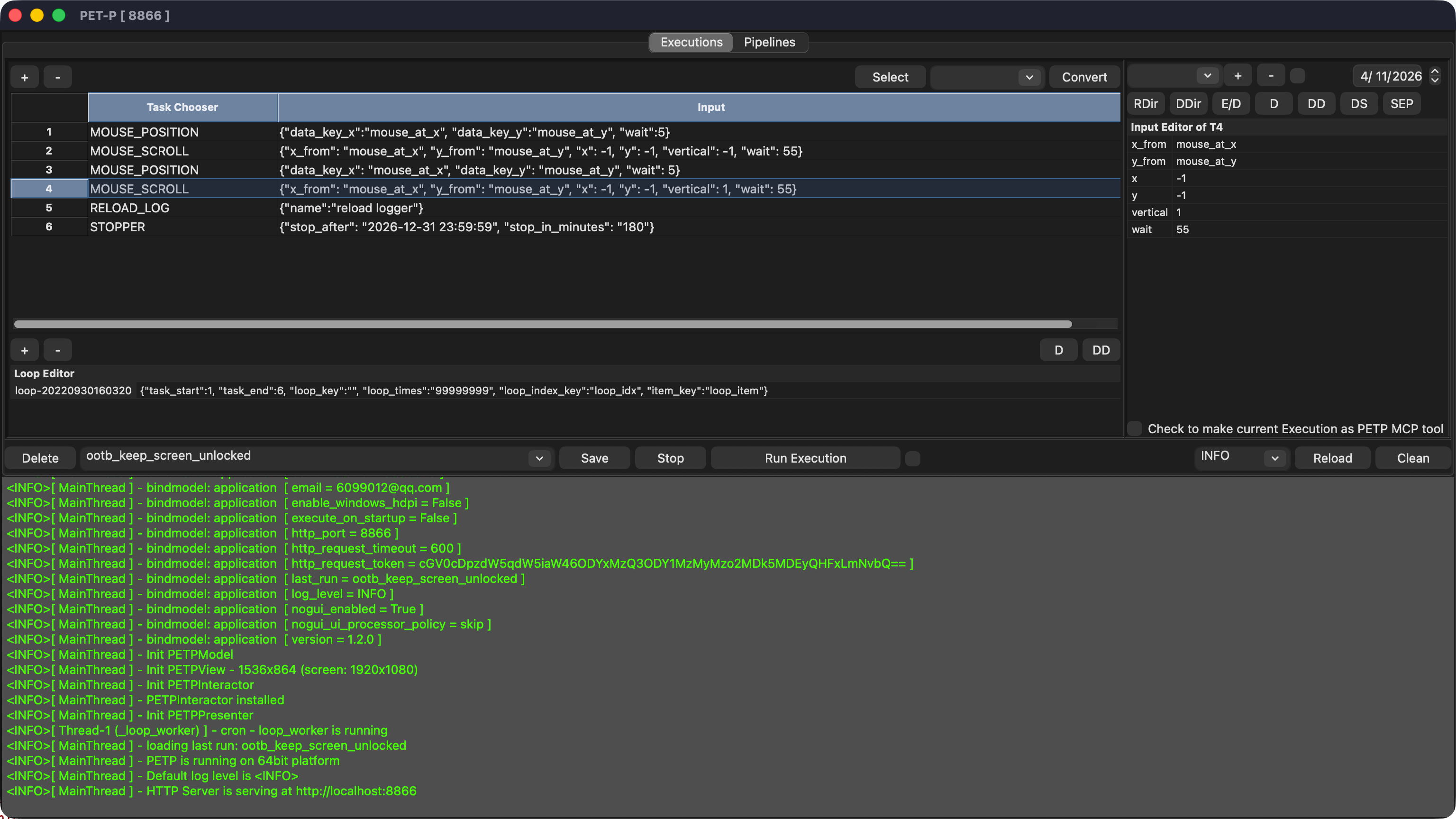

Flexible Orchestration

Chain any mix of processors in a single execution. Branch on LLM output, loop over lists, retry on error — all in YAML, no code required.

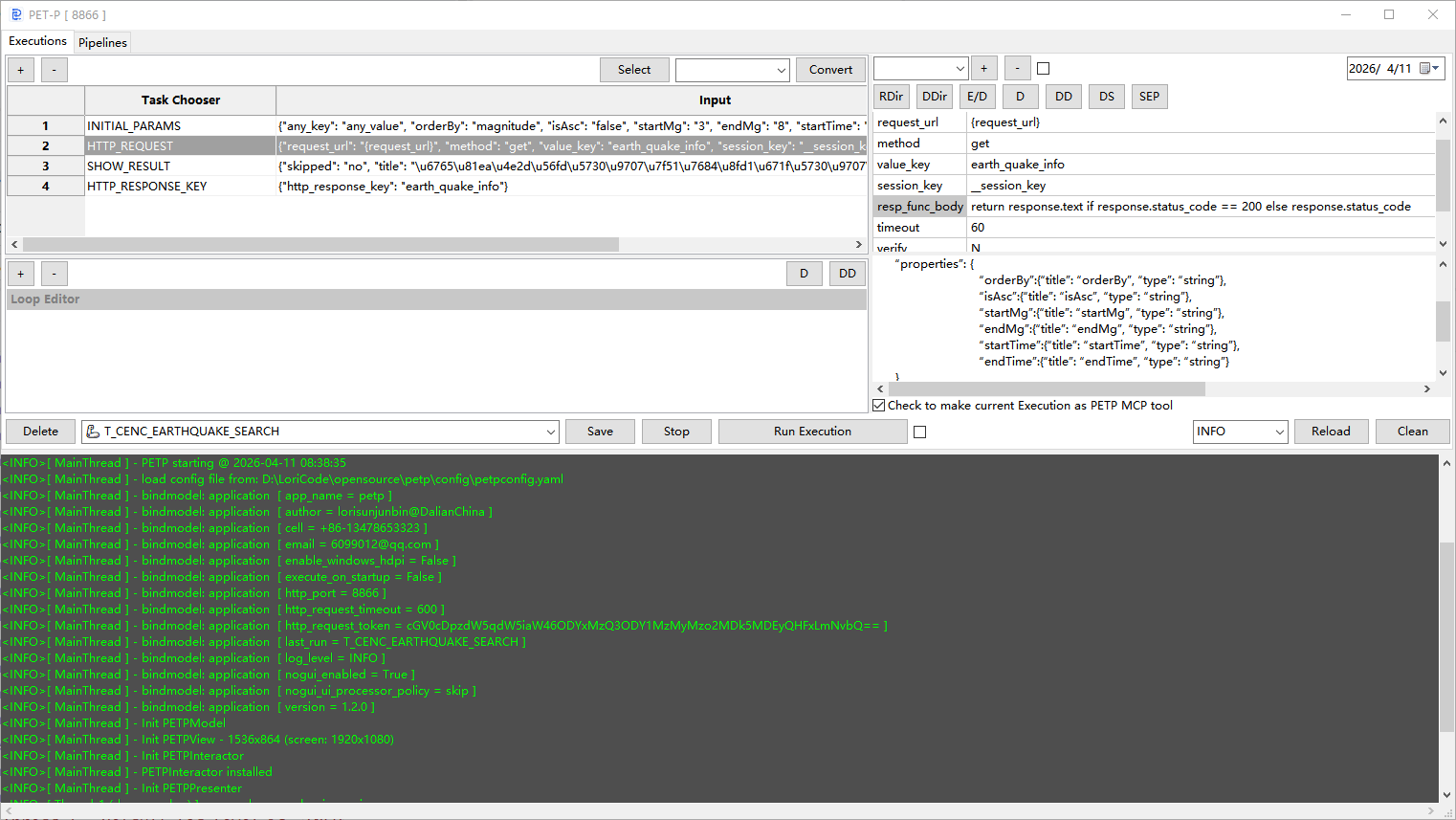

MCP Tool Server · Task Orchestration · LLM-Native

PETP is a pipeline execution runtime that exposes every automation as a typed MCP tool. AI agents can discover, invoke, and chain your real business operations — while processors inside each task can call LLMs themselves for intelligent decision-making.
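To make "typed MCP tool" concrete, here is a minimal sketch of how an execution definition could be mapped to the tool descriptor an MCP client sees (a `name`, a `description`, and a JSON Schema `inputSchema`). The `execution` dictionary, its field names, and the `to_mcp_tool` helper are hypothetical illustrations; PETP's actual execution schema is not shown on this page.

```python
import json

# Hypothetical execution definition, as it might look once parsed from YAML.
# The field names here are assumptions made for illustration only.
execution = {
    "name": "merge_contracts",
    "description": "Merge contract documents by creation date.",
    "params": {
        "folder": {"type": "string", "required": True},
        "limit": {"type": "integer", "required": False},
    },
}


def to_mcp_tool(execution: dict) -> dict:
    """Build an MCP-style tool descriptor: name, description, inputSchema."""
    properties = {}
    required = []
    for param_name, spec in execution["params"].items():
        # Each parameter becomes a typed JSON Schema property.
        properties[param_name] = {"type": spec["type"]}
        if spec.get("required"):
            required.append(param_name)
    return {
        "name": execution["name"],
        "description": execution["description"],
        "inputSchema": {
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }


tool = to_mcp_tool(execution)
print(json.dumps(tool, indent=2))
```

Because the descriptor carries a schema, an agent can validate its arguments before invoking the tool rather than guessing at free-form input.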


Any task step can invoke an LLM processor: summarise, classify, generate, or decide. Results flow as variables into downstream steps.
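The orchestration ideas on this page (results flowing as variables, branching on LLM output, looping over lists, retrying on error) can be sketched in a few lines of plain Python. This is not PETP's engine or its YAML syntax: the step functions, the `run` and `with_retry` helpers, and the stubbed `fake_llm_classify` are all hypothetical stand-ins, shown only to illustrate the control flow.

```python
from typing import Callable, Optional

Context = dict  # shared variables that flow from one step to the next


def fake_llm_classify(text: str) -> str:
    """Stand-in for an LLM call; a real processor would query a model here."""
    return "contract" if "agreement" in text.lower() else "other"


def with_retry(step: Callable[[Context], None], attempts: int = 3) -> Callable[[Context], None]:
    """Wrap a step so it is retried on error, up to `attempts` times."""
    def wrapped(ctx: Context) -> None:
        for attempt in range(1, attempts + 1):
            try:
                step(ctx)
                return
            except Exception:
                if attempt == attempts:
                    raise  # out of attempts: surface the last error
    return wrapped


def load_documents(ctx: Context) -> None:
    ctx["documents"] = ["Master Service Agreement", "Lunch menu"]


def classify_documents(ctx: Context) -> None:
    # Loop over a list and store the (stubbed) LLM results as a variable.
    ctx["labels"] = [fake_llm_classify(doc) for doc in ctx["documents"]]


def summarise(ctx: Context) -> None:
    # Branch on the LLM output produced by the previous step.
    contracts = [d for d, label in zip(ctx["documents"], ctx["labels"]) if label == "contract"]
    if contracts:
        ctx["summary"] = f"{len(contracts)} contract(s): " + ", ".join(contracts)
    else:
        ctx["summary"] = "No contracts found"


def run(steps, ctx: Optional[Context] = None) -> Context:
    """Execute steps in order, passing the same variable context to each."""
    ctx = {} if ctx is None else ctx
    for step in steps:
        step(ctx)
    return ctx


result = run([with_retry(load_documents), classify_documents, summarise])
print(result["summary"])
```

In a declarative setup, each of these functions would correspond to a configured processor, and the shared `ctx` dictionary plays the role of the variables passed to downstream steps.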

Package a curated set of executions for a specific domain — procurement, finance, DevOps, HR — and expose them as a focused MCP tool-set.

Run a personal assistant on your laptop, or deploy enterprise tool-sets on Docker. Same engine, same YAML, different scale.

Download sourcing request documents, merge contracts by creation date, classify them by type using an LLM, then send a summary email, all triggered by a single Claude message.

Pull data from multiple spreadsheets via SSH/SFTP, merge and pivot it, call an LLM to write the executive summary section, and export the result as a final Excel report.

Expose CI pipeline triggers, deployment scripts, log fetchers, and health-checks as MCP tools. Let Copilot or a chat agent coordinate complex release workflows.

A lightweight personal MCP tool-set on your MacBook: web scraping, local file management, calendar data extraction, and LLM-powered daily brief generation.

Define executions in YAML, start the MCP server, and any LLM agent can immediately discover and orchestrate your real-world workflows. No SDK. No boilerplate.